I am Hao Shi (石昊), a Master’s student in the Department of Automation at Tsinghua University, in a joint program with MEGVII Research. I am advised by Prof. Gao Huang and Xiangyu Zhang, and I work closely with Tiancai Wang.

My research focuses on Embodied AI, Robot Learning, Vision-Language-Action (VLA) models, and World Models, with the aim of building foundation models for general robotic systems.

I expect to join HKU MMLab as a Ph.D. student advised by Prof. Ping Luo in Fall 2026.

📝 Research

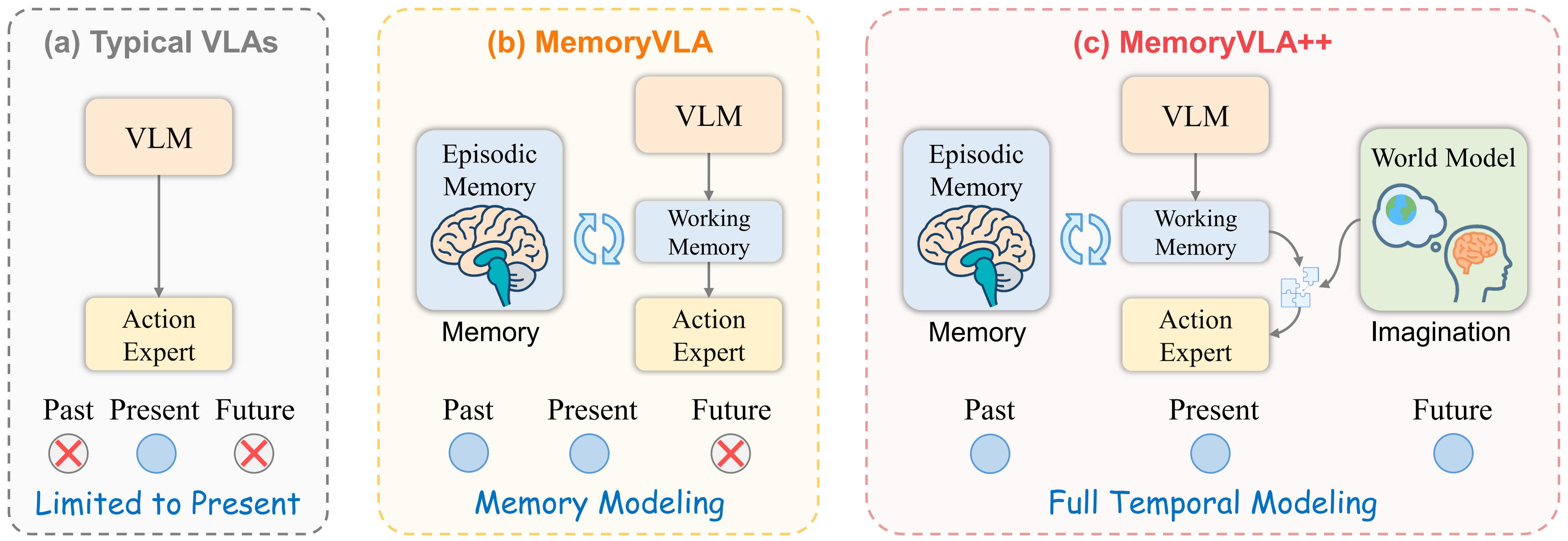

MemoryVLA++: Temporal Modeling via Memory and Imagination in Vision-Language-Action Models

Hao Shi, Weiye Li, Bin Xie, Yulin Wang, Renping Zhou, Tiancai Wang, Xiangyu Zhang, Ping Luo, Gao Huang✉

Under Review 2026 | Paper | Code | Homepage | Huggingface

- MemoryVLA++ is the extended journal version of MemoryVLA, advancing it from past-only memory modeling to full temporal modeling with both past memory and future imagination.

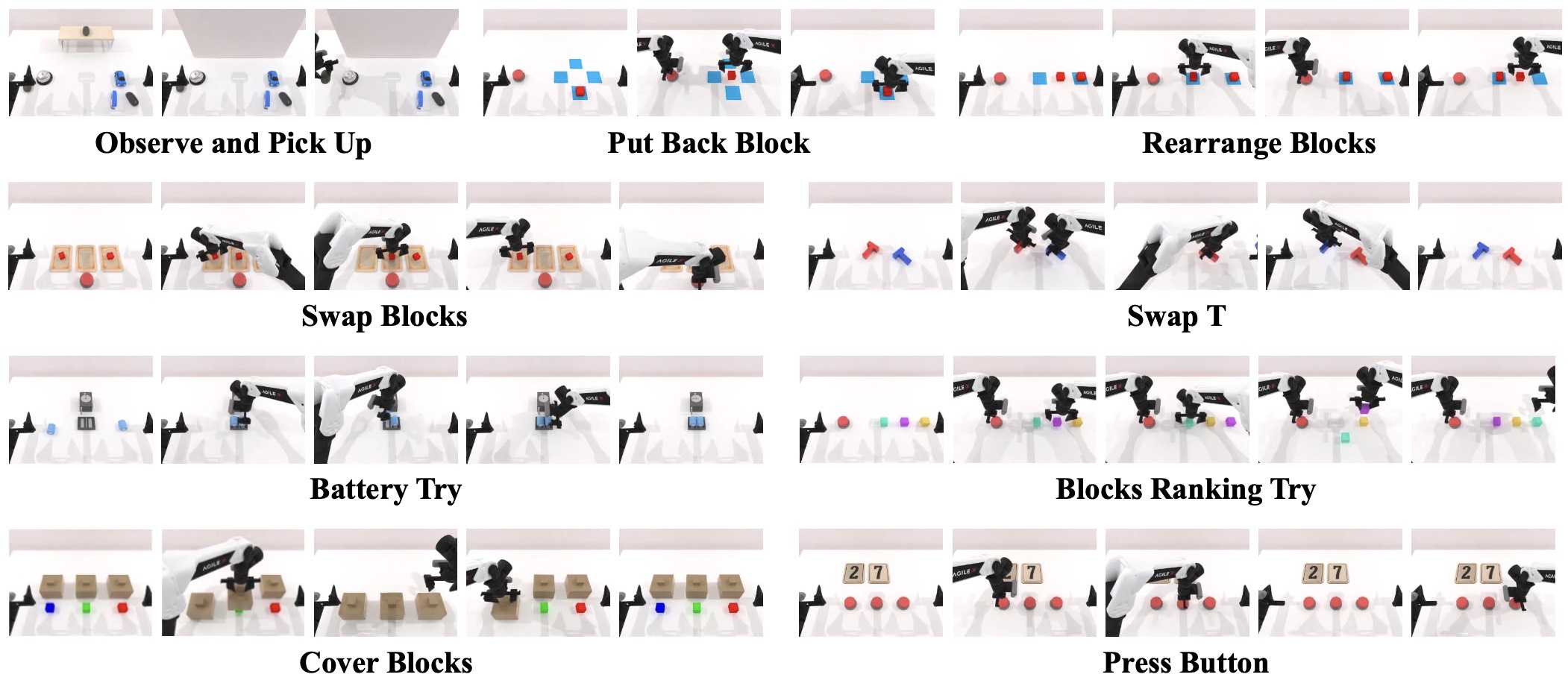

RMBench: Memory-Dependent Robotic Manipulation Benchmark with Insights into Policy Design

Tianxing Chen*, Yuran Wang*, Mingleyang Li*, Yan Qin*, Hao Shi, Zixuan Li, Yifan Hu, Yingsheng Zhang, Kaixuan Wang, Yue Chen, Hongcheng Wang, Renjing Xu, Ruihai Wu, Yao Mu, Yaodong Yang, Hao Dong✉, Ping Luo✉

Under Review 2026 | Paper | Code | Homepage | Huggingface

- RMBench is a memory-oriented benchmark built on the RoboTwin platform, and it also provides a memory-enhanced hierarchical VLA model, Mem-0.

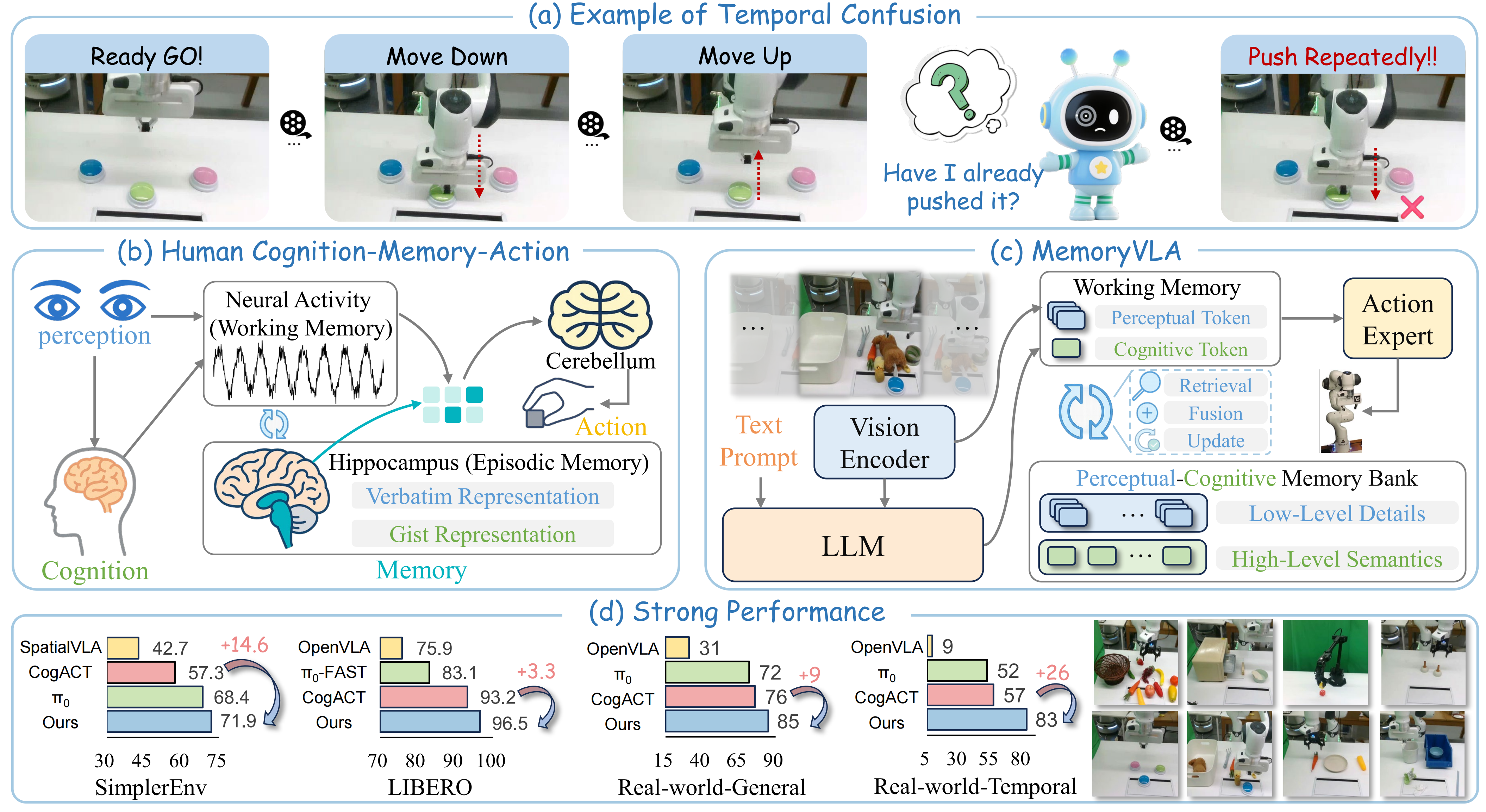

MemoryVLA: Perceptual-Cognitive Memory in Vision-Language-Action Models for Robotic Manipulation

Hao Shi, Bin Xie, Yingfei Liu, Lin Sun, Fengrong Liu, Tiancai Wang, Erjin Zhou, Haoqiang Fan, Xiangyu Zhang, Gao Huang✉

ICLR 2026 | CVPR 2026 Workshop Oral | Paper | Code | Homepage | Huggingface

- MemoryVLA is among the early works exploring memory in Vision-Language-Action models. Inspired by human memory systems, it builds a hippocampus-like memory to capture temporal dependencies. It has since been cited over 100 times, including by Physical Intelligence.

SpatialActor: Exploring Disentangled Spatial Representations for Robust Robotic Manipulation

Hao Shi, Bin Xie, Yingfei Liu, Yang Yue, Tiancai Wang, Haoqiang Fan, Xiangyu Zhang, Gao Huang✉

AAAI 2026 Oral (Accept rate≈4%) | Paper | Code | Homepage | Huggingface

- SpatialActor is a framework that uses disentangled spatial representations for robust robotic manipulation.

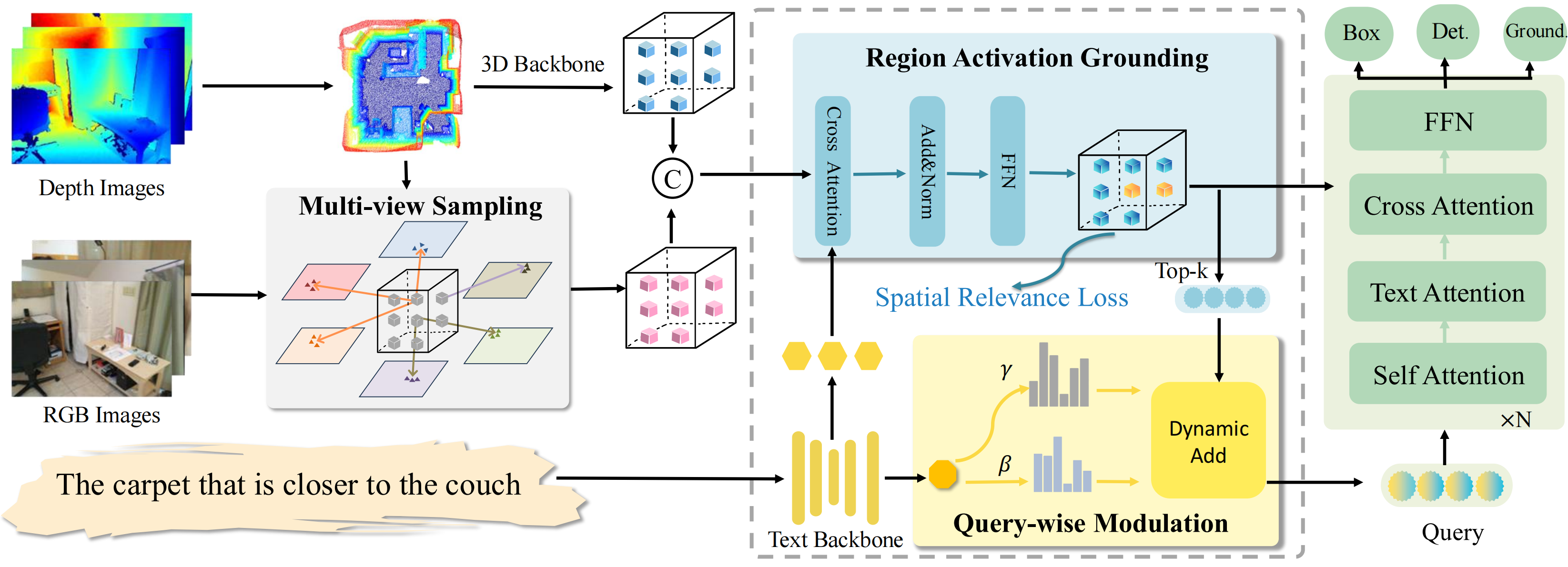

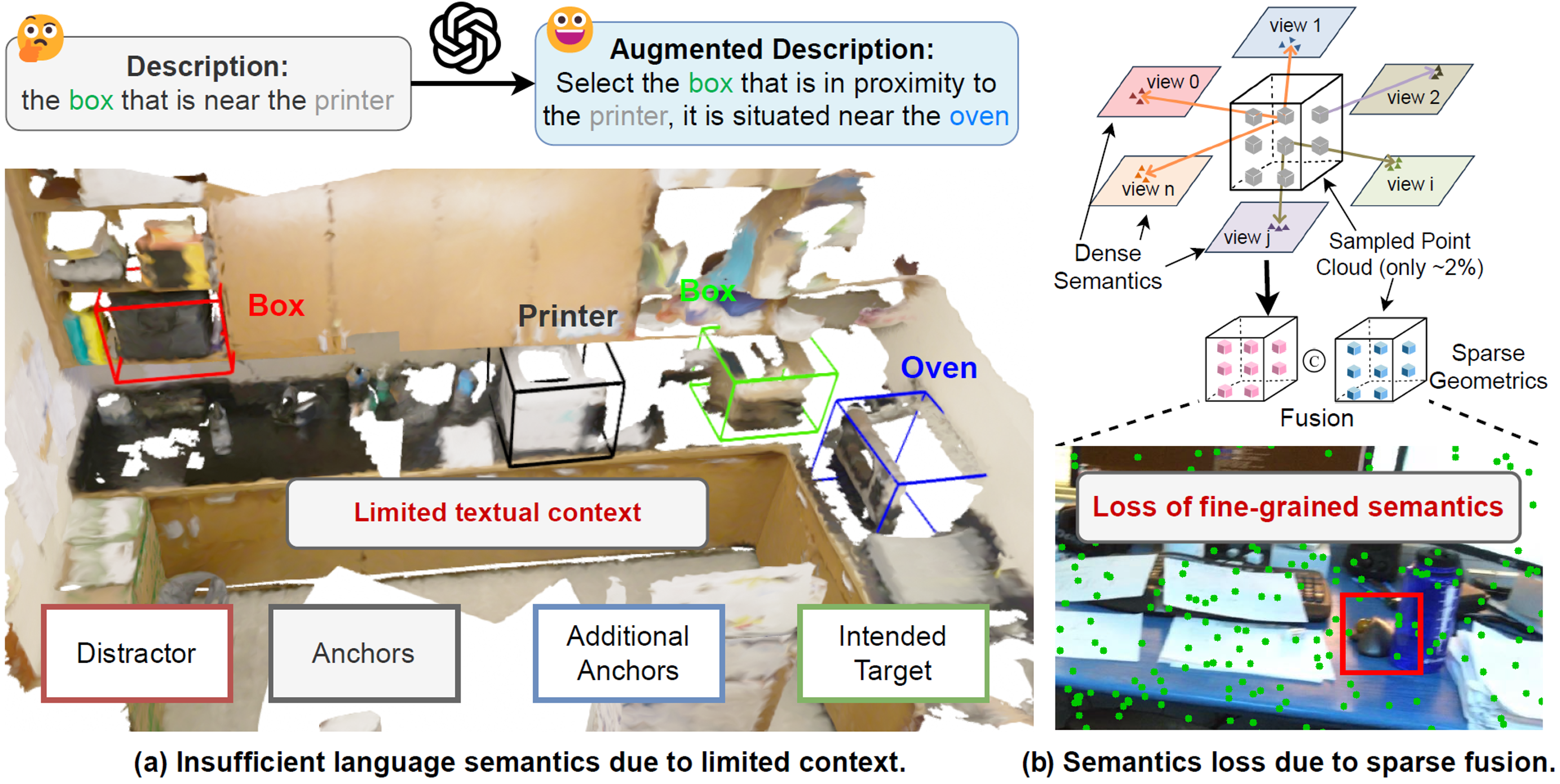

Grounding Beyond Detection: Enhancing Contextual Understanding in Embodied 3D Grounding

Yani Zhang*, Dongming Wu*, Hao Shi, Yingfei Liu, Tiancai Wang, Haoqiang Fan, Xingping Dong✉

CVPR 2026 Findings | Paper | Code

- DEGround is an embodied perception framework for 3D grounding, achieving 1st place on EmbodiedScan.

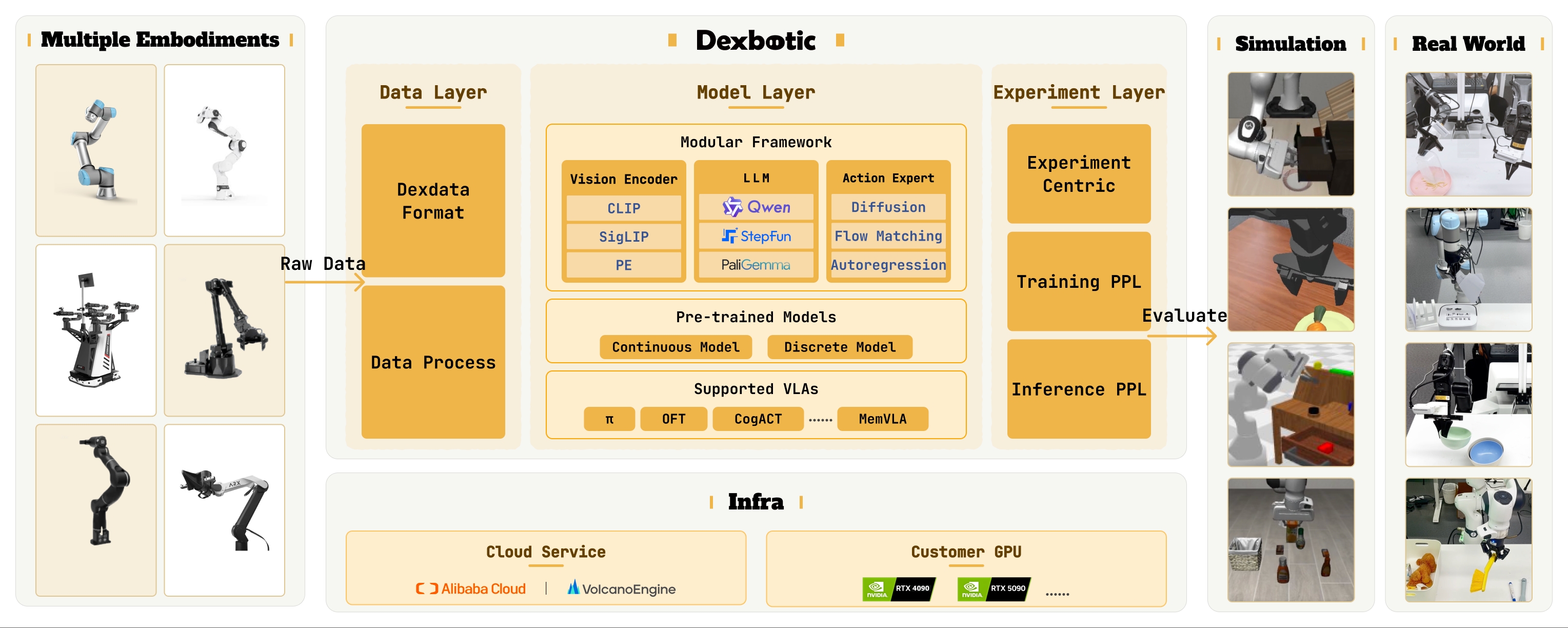

Dexbotic: Open-Source Vision-Language-Action Toolbox

Dexbotic Team

Technical Report 2025 | Paper | Code | Homepage | Huggingface

- Dexbotic is an open-source VLA codebase (similar to MMDetection). It unifies multiple mainstream VLA frameworks and benchmarks, provides strong pretrained models, and has garnered more than 1000 GitHub stars.

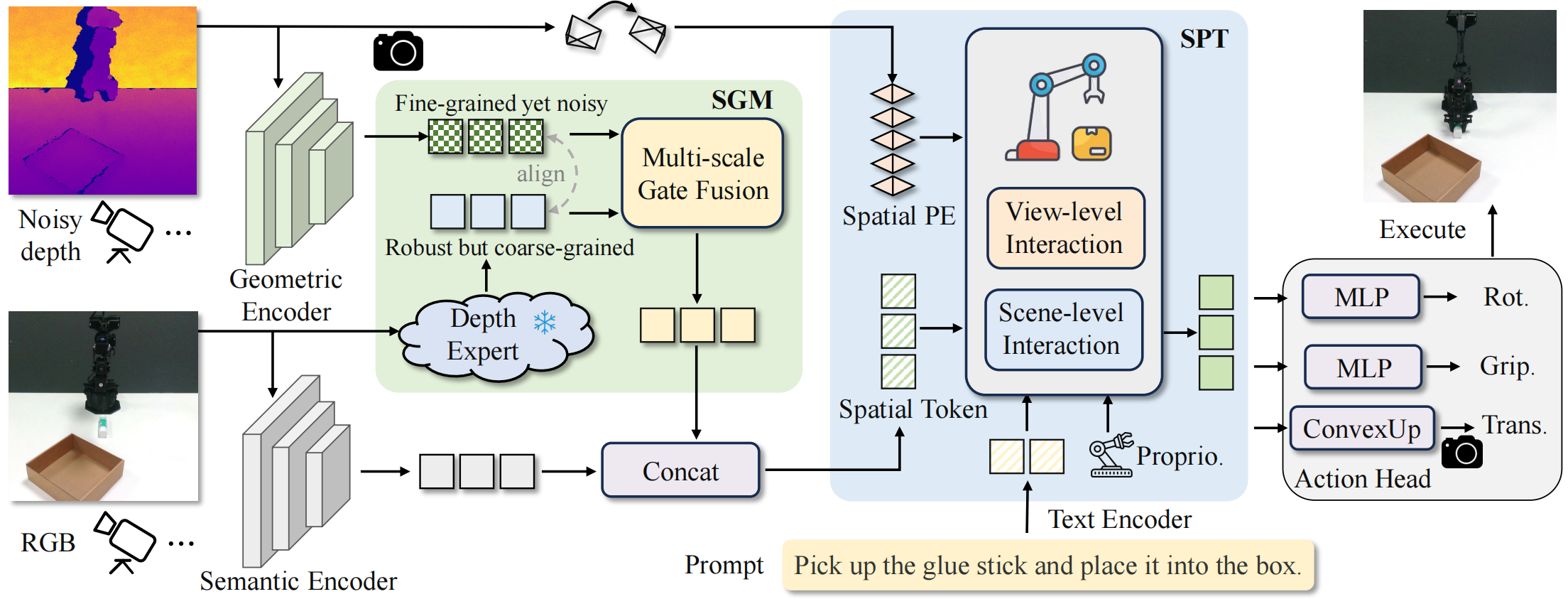

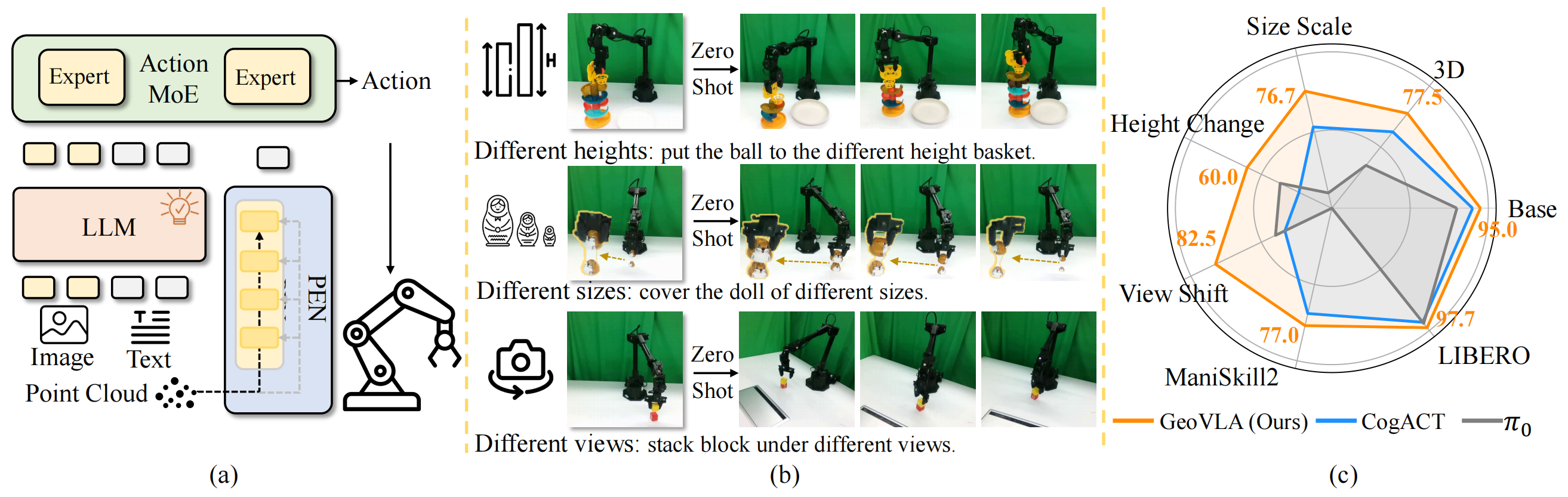

GeoVLA: Empowering 3D Representations in Vision-Language-Action Models

Lin Sun*, Bin Xie*, Yingfei Liu, Hao Shi, Tiancai Wang, Jiale Cao✉

Under Review 2025 | Paper | Code | Homepage

- GeoVLA is a framework that bridges 2D semantics and 3D geometry for VLA and achieves robustness across diverse camera views, object heights, and sizes.

DenseGrounding: Improving Dense Language-Vision Semantics for Ego-centric 3D Visual Grounding

Henry Zheng*, Hao Shi*, Qihang Peng, Yong Xien Chng, Rui Huang, Yepeng Weng, Zhongchao Shi, Gao Huang✉

*: equal contribution, ✉: corresponding author.

ICLR 2025 | CVPR 2024 Workshop Oral | Paper | Code

- DenseGrounding is an embodied perception framework for multi-view 3D visual grounding. It won 1st Place and the Innovation Award in the CVPR 2024 Autonomous Grand Challenge ($9000, 1st of 154 submissions).

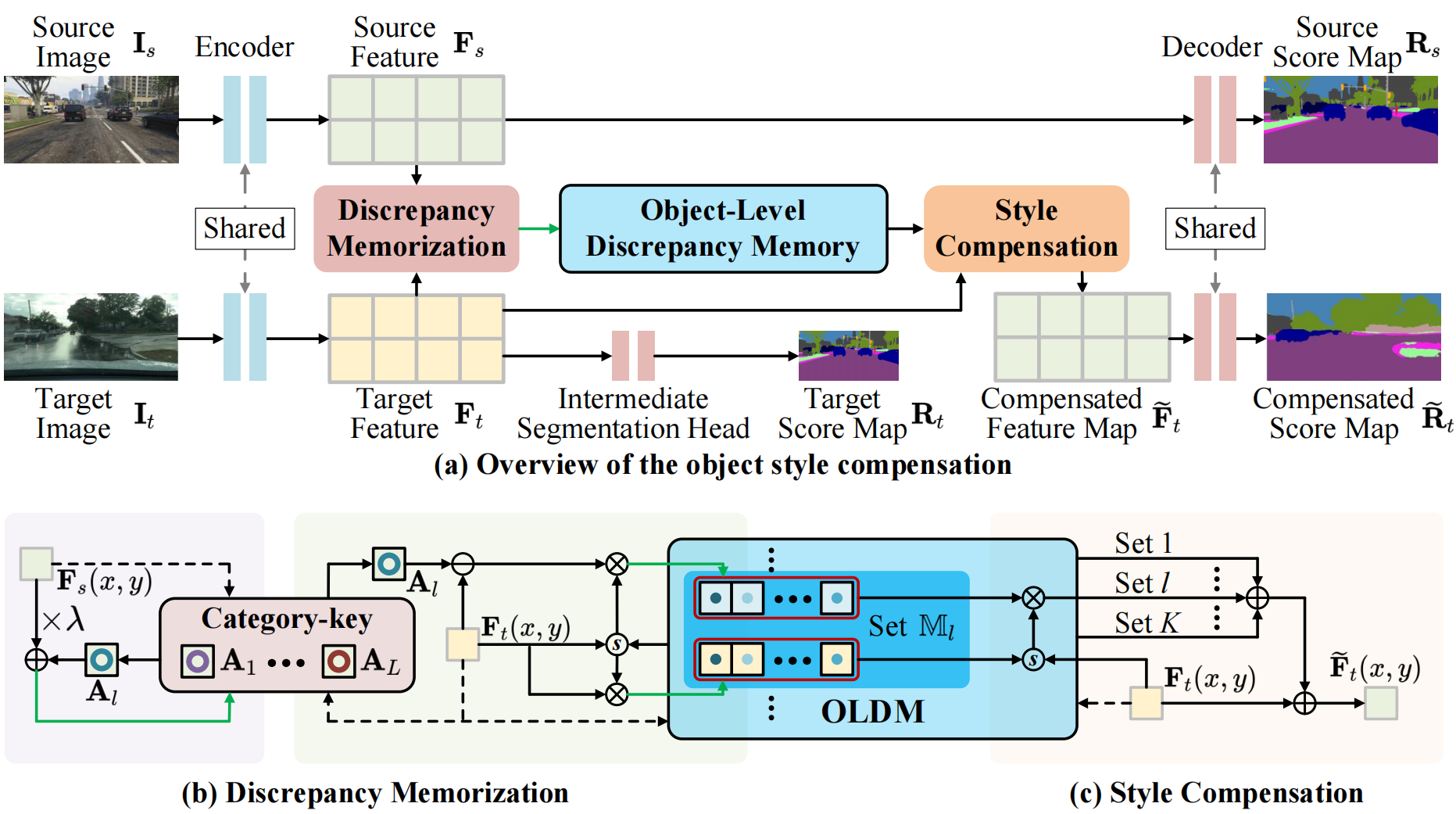

Open Compound Domain Adaptation with Object Style Compensation for Semantic Segmentation

Tingliang Feng*, Hao Shi*, Xueyang Liu, Wei Feng, Liang Wan, Yanlin Zhou, Di Lin✉

- We propose a memory-bank-based object-style compensation method for open compound domain adaptation.

🎖 Honors and Awards

- 2026.05, Beijing Outstanding Graduate Award. (Only 1 Master’s student in the Dept. of Automation, THU)

- 2026.05, ICML Gold Reviewer Award.

- 2026.01, Tsinghua Deng Feng Fund, Tsinghua University. (¥15000)

- 2025.11, Minghong Scholarship, Comprehensive Excellence 1st Prize, Tsinghua University. (Top 10% in THU, ¥10000)

- 2024.11, Philobiblion Scholarship, Comprehensive Excellence 1st Prize, Tsinghua University. (Top 10% in THU, ¥10000)

- 2024.06, 1st Place and Innovation Award in the CVPR 2024 Autonomous Grand Challenge, Embodied 3D Grounding Track. (1st of 154 submissions, $9000)

- 2023.11, CXMT Scholarship, Comprehensive Excellence 1st Prize, Tsinghua University. (Top 10% in THU, ¥10000)

- 2023.06, Outstanding Bachelor’s Thesis Award, Tianjin University.

- 2021.12, Huawei Intelligent Base Scholarship, Ministry of Education-Huawei Intelligent Base Future Stars.

📖 Education

M.Eng. @ LeapLab, Tsinghua University, Beijing.

Advisors: Prof. Gao Huang and Dr. Xiangyu Zhang

B.Eng. in Materials Science, Tianjin University.

💻 Internship

Dexmal, Embodied Foundation Algorithm Group, Beijing

Mentors: Tiancai Wang, Yingfei Liu and Bin Xie

💬 Invited Talks

- 2026.06, invited talk about MemoryVLA, CVPR 2026 MARS Workshop, Denver

- 2026.01, invited talk about SpatialActor, AAAI 2026 Main Conference, Singapore

- 2025.09, invited talk about MemoryVLA, 3D视觉工坊, Online

- 2025.09, invited talk about MemoryVLA, 具身智能之心, Online

- 2024.06, invited talk about DenseGrounding, CVPR 2024 Workshop on Foundation Models for Autonomous Systems, Seattle

- 2024.06, invited talk about DenseGrounding, Technical Seminar on End-to-End Embodied Agent, Shanghai

🎓 Service

Reviewer / PC Member:

- ICLR 2026, ICLR 2025

- ICML 2026

- NeurIPS 2026

- CVPR 2026

- ICCV 2025

- AAAI 2026

- IROS 2026